In part 1 of this 3-part series, Baris Oztop, a Computer Science graduate student at the Technical University of Munich (TUM), Germany, covered the various SAP Portal analytics products on the market. In part 2 we dive into the specific features that can make or break your decision when choosing an SAP Portal statistics gathering tool:

Part 1 covered: Overview of SAP Portal Analytics Products

Part 2: SAP Portal Analytics Product Comparisons Continued

Part 3 will conclude the SAP Portal analytics research and cover:

(The full pdf version of “A Complete Overview of SAP Portal Analytics Tools” coming soon.)

On with part 2 …

Previously visited pages might be cached in the visitor’s browser, or the user’s internet service provider might cache them on its proxy servers to speed up subsequent visits. Visitors may also sit behind a corporate network that runs proxy servers, or access the Internet through proxy servers. In these cases, subsequent visits are answered from the cache instead of the portal’s server itself.

This is a problem if you are using server log files for analytics, because those requests never reach the portal server, so no log entries are written for them.

The page tagging technique sends the visitor data as parameters of a request for an invisible image, and this image can be cached too. However, vendors avoid this by passing a unique number (e.g. the current date-time) along with the image request within the JavaScript code. This makes each image request look like a new request to the server. Hence, visitor data is collected without interference from caching mechanisms.
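The cache-busting idea described above can be sketched in a few lines. This is only an illustration in Python, not any vendor’s actual tag (real page tags run as JavaScript in the browser). The collector URL, the parameter names `page`, `res` and `cb`, and the function name `build_beacon_url` are assumptions made up for this example.

```python
import time
from urllib.parse import urlencode, urlsplit, parse_qs


def build_beacon_url(collector, visitor_data, now_ms=None):
    """Return an image-request URL carrying visitor data plus a unique number.

    The unique `cb` value (here: the current time in milliseconds) makes every
    URL different, so a browser or proxy cannot answer it from its cache.
    """
    if now_ms is None:
        now_ms = int(time.time() * 1000)
    params = dict(visitor_data)
    params["cb"] = str(now_ms)  # hypothetical cache-buster parameter name
    return collector + "?" + urlencode(params)


# Hypothetical collector endpoint and visitor data, for illustration only.
COLLECTOR = "https://collector.example.com/pixel.gif"
DATA = {"page": "/portal/home", "res": "1280x800"}

first = build_beacon_url(COLLECTOR, DATA, now_ms=1000)
second = build_beacon_url(COLLECTOR, DATA, now_ms=1001)

# Same page, same visitor data, but two distinct URLs for the cache.
sent = parse_qs(urlsplit(first).query)
print(first != second, sent["page"], sent["cb"])
```

Because the two URLs differ only in the `cb` value, a cache treats them as different resources and forwards both requests to the collection server.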

Even though pages are cached, analytics data is always sent to Google’s data-gathering servers as long as portal visitors have access to the internet, because the page tag in the cached page generates a new request to Google’s servers every time it is loaded. Therefore, your reports won’t be affected by pages served from proxies or caches instead of the portal’s server.

If you prefer to use Urchin without page tagging, pages served from a cache instead of the server won’t produce any entries in the server log files, which will skew the metrics in your analytics reports. Urchin can overcome this issue with its page tagging method. It works on the same idea as Google Analytics, but the data collected by the page tags is written to the server log files instead of being transferred to a separate server. As a result, whether cached pages affect your analytics reports depends on which method you choose to use with Google Urchin.

Webtrends’ page tagging code is able to send analytics data in case of cached views.

SiteCatalyst’s page tagging code also avoids the caching problem by generating random numbers in its image request parameters for each visit.

Click Stream records the navigation activities on the portal to prepare analytics reports. Therefore, caching by the user’s web browser or by proxy servers won’t prevent analytics data from being gathered.

With the page tagging method, you add additional JavaScript code to your portal pages, as mentioned before. We therefore expect that the page is loaded correctly and that the analytics page tag has collected the necessary data from the visitor’s browser. However, this might not be the case.

One reason is another, faulty script on the page that stops the browser’s script engine, so the page tagging code is never executed. Another reason is partially loaded pages. These occur when loading is interrupted by a connection problem, when the user clicks the browser’s “stop” button during loading, or when visitors who are familiar with the portal navigate quickly between pages before a page’s content is fully loaded. As a result, a page tag may submit the usual analytics data as if the user had viewed the page, or the user may have viewed the page content without the page tag submitting any analytics data. Which of these happens also depends on where you place the page tag code on the portal pages. You don’t have to worry about this if you are using server log files for analytics, because they record failures as well as normal accesses once a request has reached the server.

Google suggests adding the tracking code right before the closing tag for your pages to avoid problematically loaded pages sending analytics data in case of interruptions.

If you are using Urchin with the page tagging method, Urchin’s online documentation suggests putting the page tagging code after the META tags in the HEAD section. Without the page tagging method, you don’t need to modify any of your pages.

Webtrends recommends placing the JavaScript tag before the tag for your web page to avoid problematically loaded pages sending analytics data in case of interruptions. Webtrends’ tagging code also interacts with the HTML meta tags located on the pages and includes them in reports in addition to visitor-related information.

The SiteCatalyst implementation guide suggests placing the JavaScript tag near the top of the body tag, to be able to record visits that result from quick navigation between pages before a page is fully loaded.

Click Stream’s page tag is inserted into the framework page of the portal, and some of the data, such as the user’s browser type and screen resolution, is collected via the user’s browser. Problematically loaded pages might disturb the collection of such information. On the other hand, each requested page is already tracked on the Java server side. Therefore, problematically loaded pages won’t result in a total loss of tracking data.

You might have already realized that changing the analytics provider of your portal can be laborious if you are using page tags, because you have to replace or remove all of the previously added page tags. A common scenario is also that you want to use your earlier analytics data with the new vendor, so you need to export that data in a form your new provider can parse. You can avoid this issue if you use log file analytics software, because server log files are written in one of the available standard formats, which many log file analyzers can parse. Providing the earlier log files to the new software therefore lets you analyze earlier data along with newly generated data.
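To illustrate why standard log formats make switching tools easier, here is a minimal sketch that counts page views from lines in the Common Log Format, one of the widely used server log formats. The sample lines, paths and user name are made up for this example, and the helper names `parse_clf_line` and `page_views` are hypothetical. A real analyzer handles many more cases (other formats, bots, static resources).

```python
import re
from collections import Counter

# Common Log Format: host ident user [time] "method path protocol" status size
CLF_PATTERN = re.compile(
    r'(?P<host>\S+) (?P<ident>\S+) (?P<user>\S+) \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-)'
)


def parse_clf_line(line):
    """Return the fields of one log line as a dict, or None if it does not match."""
    match = CLF_PATTERN.match(line)
    if match is None:
        return None
    entry = match.groupdict()
    entry["status"] = int(entry["status"])
    return entry


def page_views(lines):
    """Count successful GET requests per path; failures stay in the log but are not counted."""
    views = Counter()
    for line in lines:
        entry = parse_clf_line(line)
        if entry and entry["method"] == "GET" and entry["status"] == 200:
            views[entry["path"]] += 1
    return views


# Made-up sample log lines, for illustration only.
SAMPLE_LOG = [
    '10.0.0.5 - jdoe [10/Oct/2011:13:55:36 +0200] "GET /irj/portal HTTP/1.1" 200 2326',
    '10.0.0.7 - - [10/Oct/2011:13:56:01 +0200] "GET /irj/portal HTTP/1.1" 200 2326',
    '10.0.0.7 - - [10/Oct/2011:13:56:09 +0200] "GET /missing HTTP/1.1" 404 512',
    'not a log line',
]

views = page_views(SAMPLE_LOG)
print(views)
```

Since the format is not tied to any vendor, the same old log files can be fed to whichever analyzer you switch to.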

For the page tagging vendors, reprocessing earlier data or other vendors’ data won’t be possible. However, the report export features they provide allow you to export selected analytics reports, which can be used later on according to your scenario.

Currently GA offers three file formats for exporting your analytics reports: PDF (beta), comma-separated CSV and tab-separated TSV, with a limit on the number of rows exported from a report.

Urchin supports three different formats for exporting reports: plain text, XML and CSV. The report limit, i.e. the number of rows that can be exported and displayed in Urchin’s user interface, can be modified. As mentioned before, Urchin is able to use the page tagging method and write the analytics data into the server log files. Using Urchin with standard log files instead of page tagging won’t restrict you if you later decide to switch to another log file processing vendor.

Webtrends offers Microsoft Word, PDF and CSV options, and a specialized database export option for use with Webtrends SmartReports, a tool for creating reports to be viewed in Excel.

SiteCatalyst supports PDF, HTML and CSV export options in its reports.

Click Stream supports CSV export with three different delimiters: comma, semicolon, and tab. Additionally, you can take screenshots of the charts available in the reports in JPG or PNG format.
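If you plan to reuse exported reports elsewhere, the delimiter matters when reading them back. The following minimal sketch reads the same made-up report in the three delimiter variants mentioned above. The column names and values are invented for illustration, `read_export` is a hypothetical helper, and real exports from any of these tools may be laid out differently.

```python
import csv
import io


def read_export(text, delimiter):
    """Parse an exported report into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text), delimiter=delimiter))


# The same made-up report content with comma, semicolon and tab delimiters.
COMMA = "page,views\n/home,120\n/news,45\n"
SEMICOLON = "page;views\n/home;120\n/news;45\n"
TAB = "page\tviews\n/home\t120\n/news\t45\n"

rows = [
    read_export(COMMA, ","),
    read_export(SEMICOLON, ";"),
    read_export(TAB, "\t"),
]

# All three variants carry identical data once the right delimiter is used.
print(rows[0] == rows[1] == rows[2])
```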

Dependency on cookies and JavaScript can cause some issues. All available web browsers allow their users to enable or disable JavaScript support. Page tagging cannot deal with this situation, so it results in untracked visits. Also, as mentioned earlier, problematically loaded pages might skew your analytics reports.

Users are also able to remove their cookies whenever they want, and some anti-spyware applications and firewalls can block cookies as well. Such applications and browsers are much more sensitive to third-party cookies than to first-party cookies; therefore, if your analytics provider uses third-party cookies, their rejection rate is much higher than that of first-party cookies. In those cases, such a visitor will be counted as a new visitor, which will skew the metrics in your analytics reports.
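The effect of deleted or blocked cookies on visitor counts can be shown with a toy example. The visit data, names and cookie IDs below are entirely hypothetical. The sketch assumes a tool that identifies visitors purely by cookie ID, which is a simplification of how cookie-based tracking works.

```python
def count_unique_visitors(visits):
    """Count visitors the way a purely cookie-based tool sees them: one per cookie ID."""
    return len({cookie_id for _, cookie_id in visits})


# Each visit is (real person, cookie ID presented to the tool). Hypothetical data.
visits = [
    ("alice", "c1"),
    ("bob", "c2"),
    ("alice", "c1"),  # returning visit with the same cookie
    ("bob", "c3"),    # bob deleted his cookies and received a new ID
    ("bob", "c4"),    # cookie was blocked, so another new ID was issued
]

reported = count_unique_visitors(visits)           # what the report shows
actual = len({person for person, _ in visits})     # how many people really visited
print(reported, actual)
```

Here two real people show up as four unique visitors, and bob’s return visits are reported as new visitors.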

Page tagging solutions use an invisible single-pixel image to transfer the collected information from the visitor’s browser to the data collection server as parameters of the image request. If visitors disable image loading in their browser, this is another situation where page tagging won’t work.

Google Analytics depends on your browser’s JavaScript and image support, as well as first-party cookies. Disabling the browser’s JavaScript or image support, or a problem in other JavaScript code on the web page that stops the JavaScript engine of the visitor’s web browser, will prevent the analytics data from being collected.

The application of Urchin’s page tagging method is similar to Google Analytics, and it allows you to use Google Analytics along with Urchin. The only difference is that while Google Analytics sends the analytics data to Google’s data-collection servers, Urchin makes the portal’s server write the analytics data into the server’s log file. Urchin can then parse the log files and create the reports. The problems mentioned above with cookies, JavaScript and images can skew your analytics reports if you choose to use Urchin with the page tagging method. Using Urchin with log files without any page tagging information may avoid those problems, but Urchin’s reporting features will be more limited.

Both versions of Webtrends Analytics, On Demand and On Premises, use the page tagging method to collect visitors’ activity. While On Demand is offered as a hosted solution, you can run On Premises on your own server and use the software named SmartSource Data Collector (SDC) to process the tagging information. The dependency on JavaScript, first-party cookies and images may still cause the problems mentioned above, because both options use page tagging even when you host the data collection server yourself.

SiteCatalyst uses the page tagging method. It sends the collected visitor information through an invisible image request to SiteCatalyst’s data collection servers. The dependency on JavaScript, first-party cookies and images may still cause the problems mentioned above. SiteCatalyst also offers an image tag implementation that is created on the server side without JavaScript, to avoid the problems resulting from JavaScript.

Click Stream uses page tagging to record user activities as well. It differs from the other vendors in two main ways. First, instead of sending the collected visitor information as parameters of an invisible image request, it writes the analytics data directly to a user-defined database via the installed Click Stream software package. Second, it doesn’t use any cookies at all to keep track of the user’s earlier visits and the redirected page. User-related information is gathered from SAP’s User Management Engine (UME) API. This also allows Click Stream to keep track of the same user on different devices.

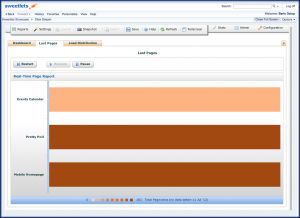

Click Stream SAP Portal analytics real time report

Real-time reports about your portal can be of great interest. Such reports not only give metrics updated in real time, but may also answer questions such as which pages are currently being viewed and by whom, or which users are currently logged in to your portal, and they can even show the load distribution of your servers so you can analyze bottlenecks. Server log files are written in real time, so software can take advantage of this to produce real-time reports. Page tags are also able to send the data collected from the visitor’s browser to the data collection servers in real time. However, many page-tag-based analytics vendors don’t do this because of resource consumption: continuously collecting data from all clients is expensive.

Due to the large amount of information collected for all Google Analytics users and the geographical distribution of the data collection servers, reports are updated hourly with analytics data from 3 to 4 hours earlier. In some cases, due to interruptions in transferring log files to Google’s servers, it might take 24 hours for changes to be reflected in your reports. For portal analytics this might be your main concern. On the other hand, Google Analytics offers some statistics in real time, such as the number of active visitors on the pages. However, this feature is still in beta.

With or without the page tagging option, Urchin analyzes the server log files to generate analytics reports. It offers a scheduler that can be set to process the server log files hourly, daily, weekly or monthly. You can also run the analysis on demand by clicking a button in Urchin’s configuration user interface. Google Urchin doesn’t offer any real-time reporting.

Some of Webtrends’ metrics (Page Views, Visits, Page Views per Visit, Visitors, and New Visitor) are updated hourly.

Omniture SiteCatalyst claims that their reports are real-time.

Click Stream offers real time reporting for last visited pages and load distribution.

Setup and Implementation Costs

In Part 3: SAP Portal Analytics Product Comparisons we will continue with Baris’ research and close off his findings.

Should you have any questions or wish for some additional information into gathering statistics (user history, page view details, etc) for your SAP NetWeaver Portal, please contact us at or leave a comment below.

We wish to thank Baris Oztop for the significant amount of time he put into providing such a great resource on this topic.

Sources: